The 2026 Brand Safety Playbook for Influencer Marketing

Australian influencer marketing brand safety in 2026 — ACCC enforcement, AANA disclosure, fraud detection and the verification stack that keeps brands clear of fines.

By Donkey Dan, edited by Dr Brent Coker

Influencer marketing in Australia stopped being a low-stakes channel in March 2026, when the ACCC issued Photobook Shop $39,600 in infringement notices for hidden influencer deals.1 That single action put disclosure liability on the brand commissioning the content, not only on the creator, and it changed what “brand safety” means for every marketing team running paid creator campaigns. This playbook is what we wish every brand had on its desk before its next campaign brief: the regulators who matter, the fraud signals that actually predict trouble, the AI threats that bypass legacy vetting, and the workflow changes that turn compliance into an operating standard rather than an after-the-fact panic.

We’re going to be specific. Australian rules, Australian dollars, real precedents, real fraud benchmarks. Where we make a claim, you’ll see a numbered citation pointing to a source link at the bottom.

What does brand safety actually mean in 2026?

Five years ago, “brand safety” mostly meant making sure your YouTube pre-roll didn’t run next to extremist content. In 2026, the term has expanded into something much closer to procurement-grade due diligence. For an Australian brand running a paid micro-influencer campaign, brand safety now covers six distinct surfaces:

- Australian Consumer Law exposure — misleading or deceptive endorsements, enforced by the ACCC

- Self-regulatory disclosure — the AANA Code of Ethics Section 2.7 and the AiMCO Influencer Marketing Code of Practice

- Vertical regulators — the TGA for therapeutic goods, ASIC for financial products, ABAC for alcohol

- Audience authenticity — bot followers, engagement pods, and audience demographic fraud

- Synthetic content threats — AI-generated creators, deepfaked endorsements, and unauthorised likeness use

- Reputational drift — past creator behaviour or current audience toxicity that becomes the brand’s problem on association

Every one of those surfaces is currently producing real penalties, real cancelled campaigns, or real public-relations damage in the Australian market. Most brand-safety guides lump these into a single checklist; the rest of this playbook treats them as separate disciplines, because they require separate controls.

How the ACCC turned a 2023 sweep into a 2026 fine

The Australian Competition and Consumer Commission flagged digital-economy integrity as a top compliance and enforcement priority for the 2024–25 cycle, listing it alongside supermarket pricing and essential services.2 That was not an idle declaration. In early 2023 the ACCC ran an internet sweep of 118 high-profile influencers across Instagram, TikTok, Snapchat, YouTube, Facebook and Twitch. The headline finding: 81% of the reviewed influencers were posting content that raised concerns under the Australian Consumer Law for potentially misleading advertising.3

The vertical breakdown was uncomfortable reading for any brand operating in those categories:

| Sector | Non-compliance rate | Most common issues |
|---|---|---|
| Fashion | 96% | Undisclosed gifting, hidden affiliate links, seeded products presented as personal purchases |
| Beauty & cosmetics | High | Misleading efficacy claims, hidden sponsorships, undisclosed cosmetic procedures |
| Home & parenting | High | Undisclosed commercial relationships, fake authentic-usage scenarios |
| Gaming & technology | 73% | Vague disclosure terminology (“partner”), undisclosed hardware gifting |

The 2023 sweep was educational — no fines, just findings. March 2026 is when the ACCC switched modes. Photobook Shop (trading as Tomsen Consolidate) paid $39,600 in two infringement notices for: (a) commissioning influencers on 107 occasions between August 2024 and September 2025 with contracts that explicitly told creators “please ensure that your videos do not mention that the product is free, sponsored, or that PhotobookShop contacted you to create them in exchange for products”; and (b) editing an influencer’s review of an AI assistant tool to remove the words “a bit fiddly” and “confusing”, thereby altering the overall impression to mislead subsequent consumers.1

ACCC Deputy Chair Catriona Lowe was direct about what this means: the Australian Consumer Law applies to the digital world with the same rigour it applies to brick-and-mortar retailers. The provision of free goods is treated as a commercial relationship equivalent to monetary payment.

If you’ve been quietly running gifting campaigns without enforcing disclosure, the practical implication is uncomfortable: every undisclosed gifted post sitting in your creator-relations CRM is now legacy exposure. The fine is the new floor, not the ceiling.

AANA, AiMCO and why “gifting doesn’t need disclosure” is wrong

Statutory consumer law sits next to two self-regulatory frameworks that brands routinely under-read.

AANA Code of Ethics, Section 2.7. Mandates that advertising must be clearly distinguishable as such, applied to any marketing communication over which an advertiser exercises a reasonable degree of control. That phrase explicitly captures user-generated content where the brand has paid, gifted or otherwise arranged the post.4

AiMCO Influencer Marketing Code of Practice. Operates under the Audited Media Association of Australia and codifies the gifting position the industry has been resisting for years: gifts and value-in-kind are equivalent to formal payment for disclosure purposes. The only narrow exception is when the creator has no prior or expected commercial relationship with the brand and chooses to post purely out of personal affinity.5

The Ad Standards Community Panel — which adjudicates complaints under the AANA Code — has formally rejected ignorance as a defence. After several years of public-facing AiMCO guidance and Ad Standards explainers, “we didn’t know” is no longer credible.

What this looks like in operational terms is a small set of contractual non-negotiables that need to be in every creator brief from now on:

- Required hashtag and exact placement (in-caption, above the fold, not buried after a wall of text)

- Platform-native partnership tag (Instagram’s “Paid partnership with…” label is mandatory where available)

- Spoken disclosure in the first three seconds of any video, paired with on-screen text for sound-off viewers

- A specific termination clause for non-compliance with ACCC, TGA or AANA disclosure mandates

The vertical regulators that quietly issue the biggest penalties

Every brand-safety guide talks about the ACCC. Far fewer talk about the three Australian regulators that operate in higher-stakes verticals and that have been issuing penalties at a serious pace.

Therapeutic Goods Administration (TGA). Promotion of prescription medicines, medical devices, vitamins and cosmetic injectables sits under the Therapeutic Goods Act 1989. In 2024–25 alone the TGA requested removal of more than 13,700 unlawful social-media advertisements.6 Influencers cannot advertise prescription medicines, biologicals or unapproved goods at all. And — this is the part most brands miss — if a brand posts compliant content but a consumer leaves a comment with an unsubstantiated efficacy claim (“this lost me 50 lbs in two weeks!”), the brand bears the legal responsibility for moderating and removing it. Comment moderation is a compliance task in this vertical, not a community-management nicety.

Australian Securities and Investments Commission (ASIC). The “finfluencer” wave is now actively policed. In 2025 ASIC took targeted action against 18 suspected unlawful finfluencers; in April 2026 the regulator joined a Global Week of Action with 16 other jurisdictions.7 AFS licensees who authorise finfluencers are being formally reviewed for whether they’re supervising representatives properly. The supply-chain principle is the same as Photobook Shop: the regulator is willing to chase the corporate entity at the top of the chain, not just the loudest voice on social media.

ABAC Responsible Alcohol Marketing Code. Alcohol brands engaging influencers must verify the creator is 25 or older and that more than 80% of the audience is 18+ where platform analytics permit. Creative cannot position alcohol as a mood enhancer, therapeutic solution, or pathway to social or professional success. Most alcohol brands assume their agency is enforcing this; in our experience, the gap between assumed enforcement and actual enforcement is the #1 source of creative rework.

If your campaign touches any of these verticals, the operational answer is the same: vertical-specific clauses in the creator contract, a verifiable age check on the creator, and a moderation calendar for comment monitoring.
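The ABAC age thresholds described above reduce to a simple pre-contract gate. Here is a minimal sketch in Python, assuming the brand has already collected the creator's verified age and the adult share of their audience from platform analytics. The class, function and field names are hypothetical, the thresholds are the ones stated in this playbook, and treating missing audience data as a manual-review item is our assumption rather than anything the code prescribes.

```python
from dataclasses import dataclass
from typing import List, Optional

MIN_CREATOR_AGE = 25          # threshold as stated in this playbook
MIN_ADULT_SHARE = 0.80        # audience aged 18+ must exceed this share


@dataclass
class AlcoholCreatorCheck:
    creator_age: int                        # verified age in years
    adult_audience_share: Optional[float]   # fraction aged 18+, None if unavailable


def abac_gate(check: AlcoholCreatorCheck) -> List[str]:
    """Return the reasons a creator fails the gate (empty list means pass)."""
    problems = []
    if check.creator_age < MIN_CREATOR_AGE:
        problems.append("creator is under 25")
    if check.adult_audience_share is None:
        # Assumption: missing analytics is escalated, not auto-approved.
        problems.append("audience age data unavailable; needs manual review")
    elif check.adult_audience_share <= MIN_ADULT_SHARE:
        problems.append("adult audience share is not above 80%")
    return problems
```

Returning a list of reasons rather than a bare pass/fail makes it easier to record why a creator was excluded, which is useful if the decision is ever questioned.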

The fraud problem most brands are still under-pricing

Influencer fraud is the part of brand safety where the gap between marketing-team awareness and actual financial exposure is widest. Industry estimates put global influencer fraud losses between $1.3 billion and $4.6 billion annually.8 Specific 2025 audits of large samples of influencer accounts have produced fake-follower or artificial-inflation rates of 14.2% (Digital Applied), 37.2% (SociaVault, 100,000-account sample), and roughly 30% (Influencer Marketing Hub).9 Even the conservative end of that range means roughly one in seven creators you might shortlist has measurably fake audience data.

The detection signals are well-understood; the discipline of actually checking them before signing a contract is what’s missing.

- Engagement velocity curve. Real engagement trickles in over 24–48 hours as different time zones come online. Pod-driven engagement spikes vertically in the first hour and flatlines.

- Action discrepancy. Hundreds of comments on a post with zero saves, zero shares and zero click-throughs is coordinated activity, not real attention. Story-view-to-post-engagement ratio is another tell — a creator with 100,000 followers, 200,000 likes per post and 500 daily Story views is showing you a complete disconnect.

- Semantic repetition. Pods comment fast and shallow — fire emojis, one-word praise, generic platitudes that could apply to any image. Real audience comments carry context, references to prior posts, in-jokes.

- Geographic mismatch. A Sydney or Melbourne creator with an audience heavily skewed toward markets where they have no logical relevance is acquiring followers, not earning them.

- Backend analytics refusal. Authentic creators share their platform-side analytics screenshots willingly. Refusal, delay or excuses from a creator at this step is, in our experience, the single most reliable red flag.
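Three of the signals above (engagement velocity, action discrepancy and semantic repetition) can be roughly screened in code before a human looks at the account. The following Python sketch is illustrative only: the numeric cut-offs, the function names and the "two words or fewer" definition of a low-effort comment are all assumptions, since this playbook does not specify thresholds.

```python
import re
from typing import List

# Illustrative thresholds only; tune them against accounts you trust.
FIRST_HOUR_SHARE_LIMIT = 0.70   # share of engagement landing in the first hour
LOW_EFFORT_SHARE_LIMIT = 0.60   # share of comments that are emoji-only or very short
MANY_COMMENTS = 100             # stand-in for "hundreds of comments"

_WORD = re.compile(r"[A-Za-z']+")


def first_hour_share(hours_after_post: List[float]) -> float:
    """Fraction of engagement events that landed within one hour of posting."""
    if not hours_after_post:
        return 0.0
    early = sum(1 for h in hours_after_post if h <= 1.0)
    return early / len(hours_after_post)


def is_low_effort(comment: str) -> bool:
    """Emoji-only comments and comments of at most two words count as low effort."""
    return len(_WORD.findall(comment)) <= 2


def low_effort_share(comments: List[str]) -> float:
    """Fraction of comments classified as low effort."""
    if not comments:
        return 0.0
    return sum(is_low_effort(c) for c in comments) / len(comments)


def fraud_flags(hours_after_post: List[float], comments: List[str],
                saves: int, shares: int) -> List[str]:
    """Return the names of the signals that a single post trips."""
    flags = []
    if first_hour_share(hours_after_post) > FIRST_HOUR_SHARE_LIMIT:
        flags.append("engagement_velocity")
    if len(comments) >= MANY_COMMENTS and saves == 0 and shares == 0:
        flags.append("action_discrepancy")
    if low_effort_share(comments) > LOW_EFFORT_SHARE_LIMIT:
        flags.append("semantic_repetition")
    return flags
```

A flag here is a prompt for manual review, not a verdict. Geographic mismatch and analytics refusal are left out because they depend on data only the creator's backend can provide.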

The broader point is that fraud detection cannot live downstream of a contract. Once you’ve paid, you’ve lost any practical recourse.

For the in-depth view of why trust is breaking down faster than the industry’s response — the consumer-trust data, the AI-content backlash, the macro-vs-micro shift — read our companion on why trust is getting harder for brand-side influencer marketing in 2026. It’s the strategic argument that this playbook operationalises.

The synthetic threat: AI personas, deepfakes and the audience-confidence gap

The next wave of brand-safety risk is already arriving, and most marketing teams are under-preparing for it. Generative AI now produces hyper-realistic synthetic personas that can accumulate large followings and pass surface-level vetting because they tick every taxonomy box automated systems use to classify “real talent” — face, voice, dynamic environment, scripted-but-human-sounding behaviour.

The risk profile is two-fold. First, brands inadvertently contracting with covert synthetic personas — paying real money for engagement from an entity that doesn’t exist. Second, malicious actors using deepfakes to hijack legitimate creator likenesses to endorse products the creator never agreed to. The 2025 Molly-Mae Hague deepfake perfume scam on TikTok is a representative example — an unauthorised AI-generated likeness pushed a product to her audience and the legal mess took months to clean up.

Australian consumers are not as good at spotting this as they think. Research from the Commonwealth Bank found that 89% of Australians believe they can spot an AI-generated scam, but in empirical testing they were correct only 42% of the time — statistically worse than a coin flip.10 If your customers can’t reliably distinguish synthetic from authentic, the obligation to do so falls back onto you.

Practical defences for the contracting stage:

- A live video call with any creator before contracting (simple, and a fully synthetic persona cannot do it cleanly)

- Requirement to share backend platform analytics from the creator’s authenticated platform login

- Contractual clauses prohibiting unauthorised synthetic content and reserving the right to require provenance metadata on submitted assets

- Manual heuristic review of submitted video for kinematic inconsistency, audio-visual desynchronisation, and lighting that doesn’t match the environment

This is not paranoia. It’s procurement hygiene catching up with a technology curve.

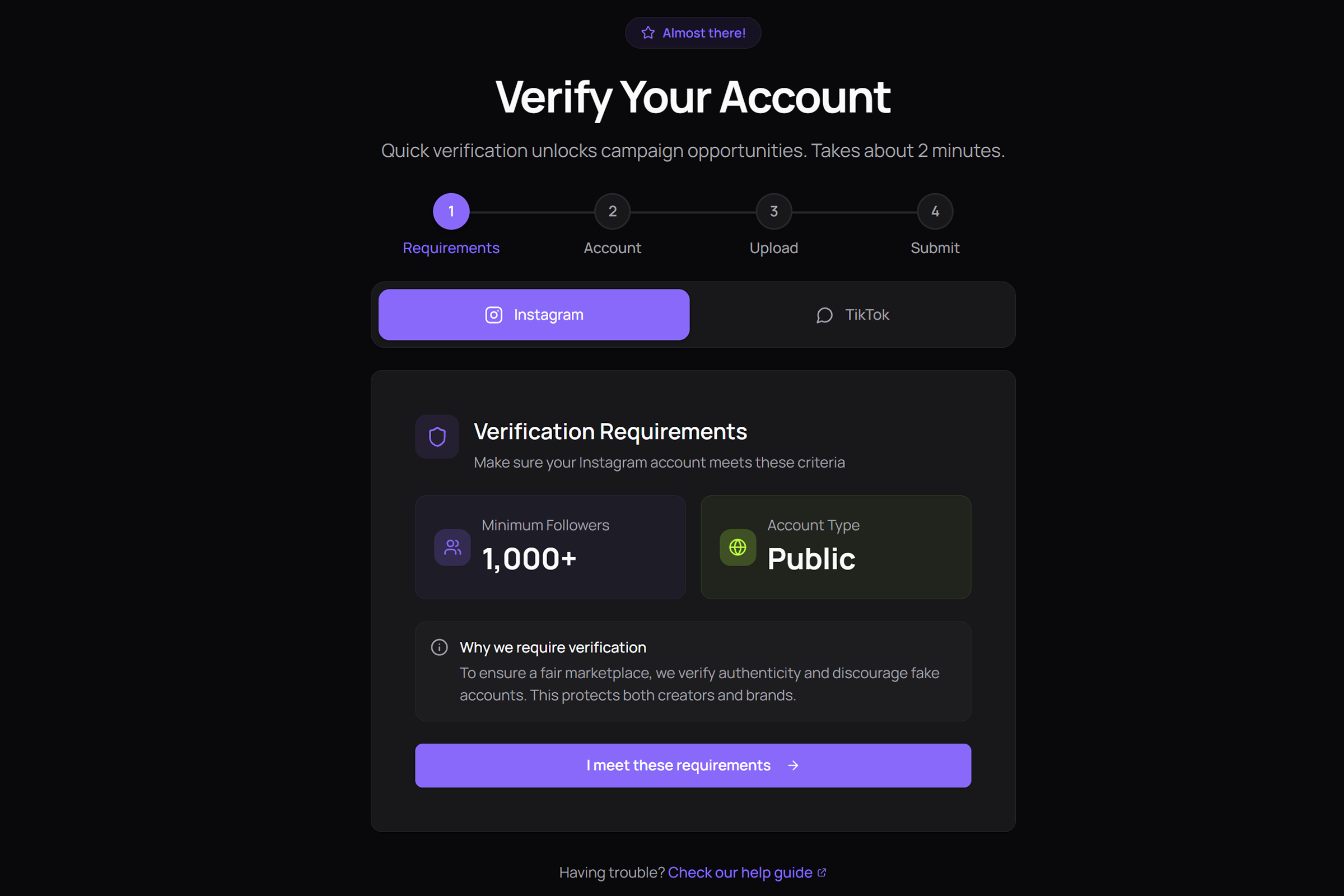

How verification works as an operational floor, not an upgrade

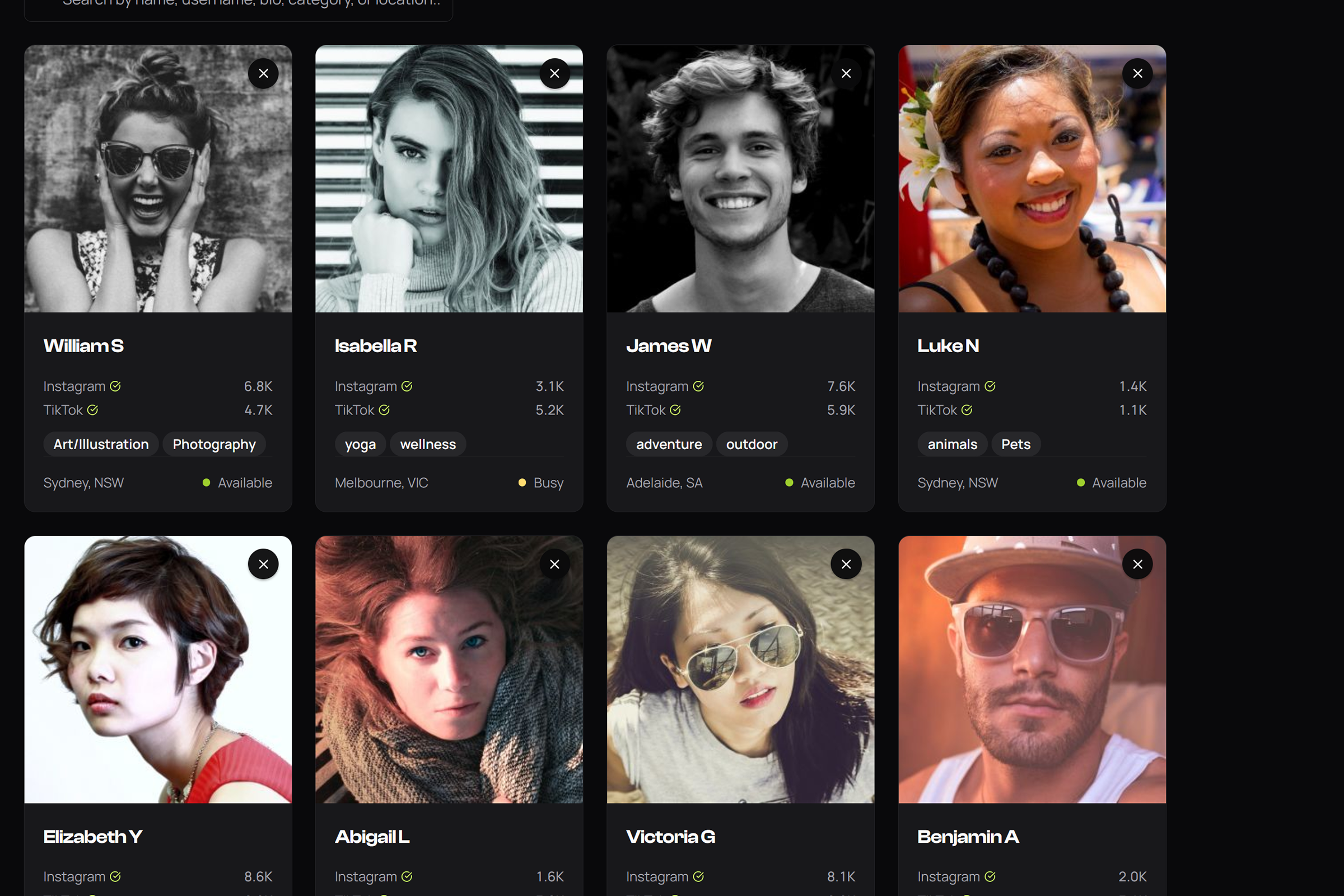

Most platforms treat creator verification as a premium feature you pay extra for or a badge a creator can apply for. We made a different architectural call when we built Mega Donkey, and it drives most of our brand-safety work: verification is the floor of the marketplace, not an upgrade tier.

Every creator on our marketplace passes AI verification before they can apply to a single campaign. The verification layer checks:

- Follower count — minimum 1,000 to enter the marketplace

- Engagement rate — minimum 2% sustained, not a single viral outlier

- Following ratio — flags accounts with statistically improbable follow patterns

- Post history — establishes a continuous, organic posting record

- Bot and pod detection — pattern-matches engagement velocity, comment semantic repetition, and audience-action discrepancy

AI analysis completes in 2–5 minutes. Admin review follows within 24–48 hours. Only verified creators appear in brand-side search and discovery. That single design decision means the brand-safety conversation starts from a floor that, with legacy DM-based outreach, you would have to rebuild yourself for every campaign.
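To make the two numeric entry thresholds concrete, here is a hedged Python sketch of a floor check. It is not Mega Donkey's implementation: the names are hypothetical, engagement rate is assumed to be (likes + comments) / followers per post, and "sustained" is approximated with the median across recent posts so that a single viral outlier cannot lift the result.

```python
from dataclasses import dataclass
from statistics import median
from typing import List

MIN_FOLLOWERS = 1_000         # stated marketplace minimum
MIN_ENGAGEMENT_RATE = 0.02    # stated minimum of 2%, sustained


@dataclass
class PostStats:
    likes: int
    comments: int


def sustained_engagement_rate(posts: List[PostStats], followers: int) -> float:
    """Median per-post engagement rate (assumed proxy for 'sustained')."""
    if not posts or followers <= 0:
        return 0.0
    return median((p.likes + p.comments) / followers for p in posts)


def meets_floor(followers: int, posts: List[PostStats]) -> bool:
    """True when both the follower and engagement minimums are met."""
    return (followers >= MIN_FOLLOWERS
            and sustained_engagement_rate(posts, followers) >= MIN_ENGAGEMENT_RATE)
```

Using the median rather than the mean is a design choice: one post with 5,000 likes among four near-silent posts would pass a mean-based check but fails this one.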

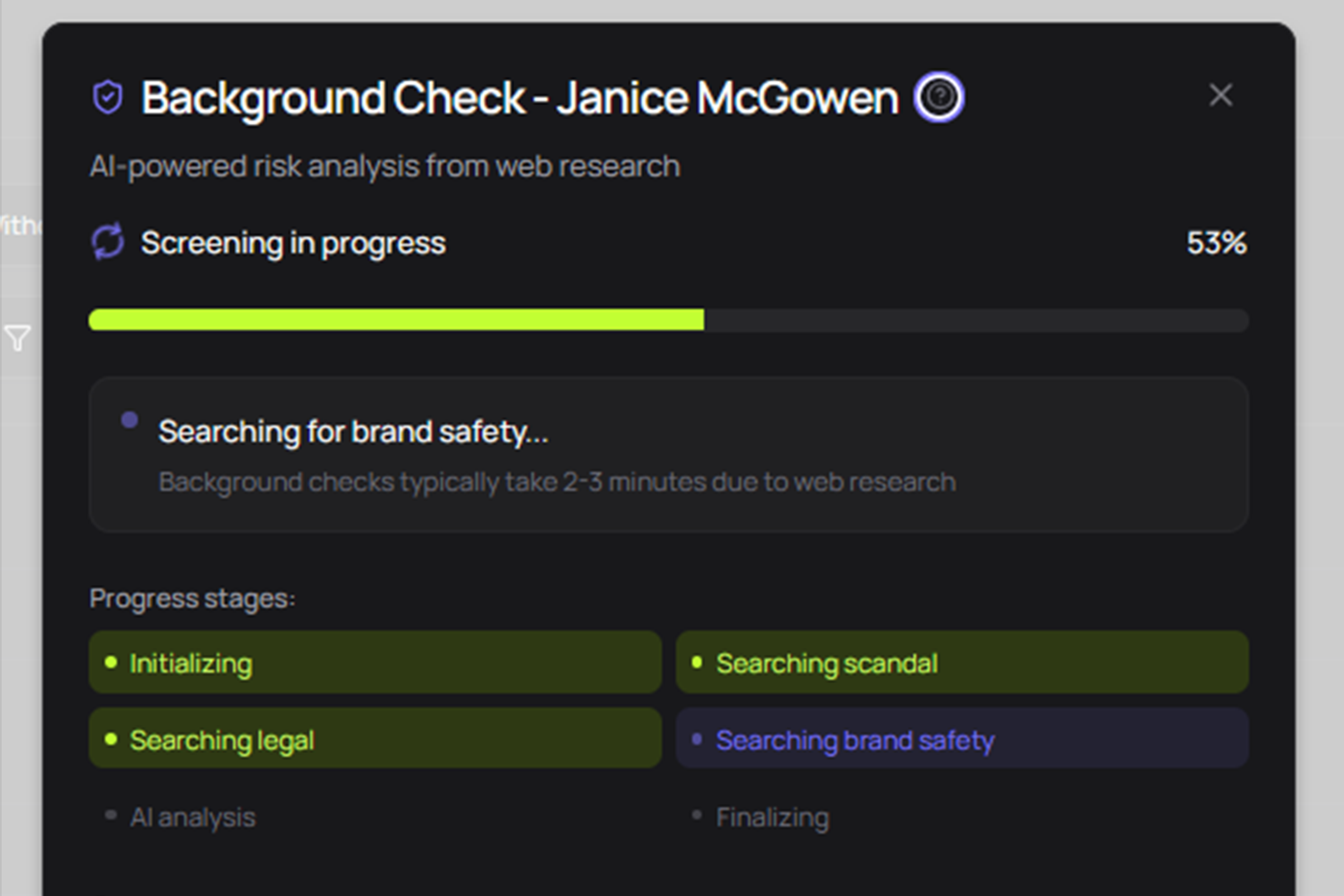

Sitting on top of verification is Safety Check AI — a separate brand-side step that runs before any contract is signed. It uses web intelligence and Claude AI analysis to scan for past scandals, legal issues, controversial content and industry-specific risks across each shortlisted creator, returning a colour-coded severity finding with a source link and confidence score for each item.

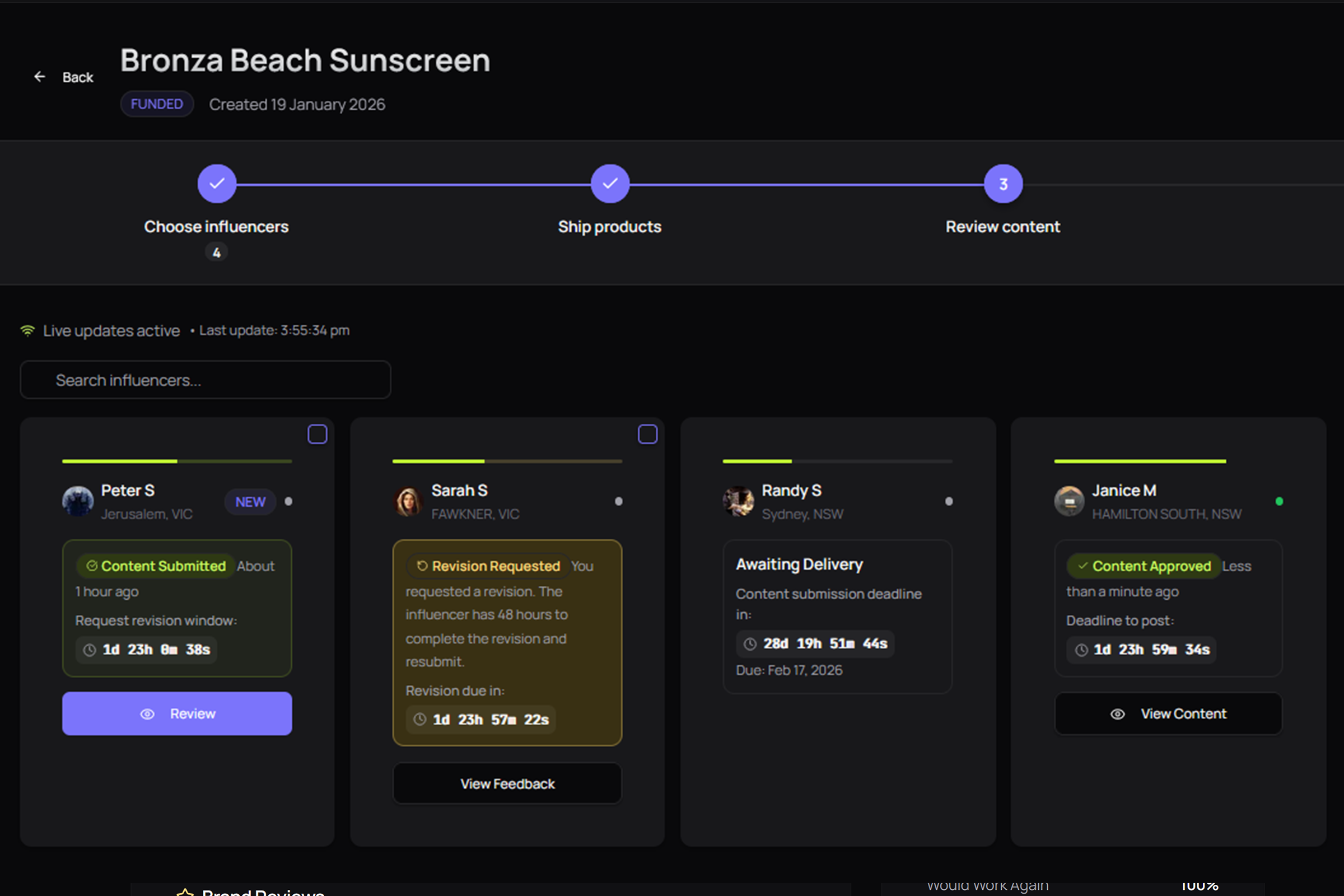

Two compliance gates that brands would otherwise have to build themselves are now part of the workflow by default.

The third layer is the Trust Score, a portable, audited reputation that follows the creator across every campaign. The score is calculated from five weighted factors: Quality (40%), Reliability (25%), Satisfaction (15%), Communication (10%), and Experience (10%). Verification status and earned badges add bonuses. After every campaign, brands leave structured reviews that feed back into the score, including a “would work again” indicator and detailed scoring on Communication, Quality and Professionalism. That feedback loop matters because — for the first time in this industry — a creator’s reputation is something a brand can audit before contracting, not after.
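The weighting above is a plain weighted average, which can be sketched in a few lines of Python. Only the five weights come from this playbook. The 0 to 100 scale for each factor, the additive treatment of verification and badge bonuses, and the cap at 100 are assumptions made for illustration.

```python
WEIGHTS = {
    "quality": 0.40,
    "reliability": 0.25,
    "satisfaction": 0.15,
    "communication": 0.10,
    "experience": 0.10,
}


def trust_score(factors: dict, bonus: float = 0.0) -> float:
    """Weighted average of factor scores (assumed 0-100) plus a bonus, capped at 100."""
    missing = WEIGHTS.keys() - factors.keys()
    if missing:
        raise ValueError(f"missing factors: {sorted(missing)}")
    base = sum(WEIGHTS[name] * factors[name] for name in WEIGHTS)
    return min(100.0, base + bonus)
```

Because Quality carries 40% of the weight, a ten-point drop in Quality costs four points of score, while the same drop in Communication or Experience costs one.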

Disclosure as a feature, not a chore

There’s an antiquated assumption inside many marketing teams that visible #ad disclosure depresses engagement, so the natural instinct is to hide it. That assumption is now both legally indefensible and empirically wrong.

Today’s audiences — particularly millennials and Gen Z — have high media literacy. They understand the creator economy is a paid economy. What they react to is being deceived. When an undisclosed sponsorship is later exposed, the betrayal of the parasocial relationship damages the credibility of both creator and brand. Clear, upfront disclosure does the opposite — it signals professionalism and respects the audience’s intelligence.

In our content review workflow, disclosure compliance is an explicit approval-gate criterion, not a post-hoc audit. Brands sign off on submitted content with the disclosure verified at the same moment they verify creative. That changes the cost of compliance from “expensive auditing function” to “two extra checkboxes in an approval flow already happening”.

Operational best practice — what should be in every brief and every contract — boils down to:

- Contractual integration. Specify the exact hashtag (#Ad, #Sponsored, #CommercialPartnership) and its placement, not vague “comply with local laws” wording.

- Platform-native tooling. Use Instagram’s “Paid partnership with…” label as the floor. When using “collab” features, an in-caption hashtag is still required.

- Visual and verbal redundancy. Spoken acknowledgement in the first three seconds of video plus on-screen text for sound-off viewers.

- Above-the-fold placement. If a viewer must tap “read more” to find the disclosure, you’ve failed the ACCC’s prominence standard.

- Affiliate clarity. “Affiliate” means affiliate. Substituting #collab is a misrepresentation of the financial relationship.
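Several of these rules are mechanical enough to check automatically at the content-approval step. The Python sketch below tests a caption for an approved hashtag and for above-the-fold placement. The function name is hypothetical, and the 125-character fold is an assumed figure, not a platform guarantee; confirm the real truncation point for each platform before relying on it.

```python
import re
from typing import List

APPROVED_TAGS = {"#ad", "#sponsored", "#commercialpartnership"}
# Assumed number of caption characters shown before "read more"; verify per platform.
FOLD_CHARS = 125

_HASHTAG = re.compile(r"#\w+")


def disclosure_issues(caption: str, has_partnership_label: bool) -> List[str]:
    """Return disclosure problems found in a draft post (empty list means none)."""
    issues = []
    if not has_partnership_label:
        issues.append("missing platform-native partnership label")
    tags = [(m.group().lower(), m.start()) for m in _HASHTAG.finditer(caption)]
    approved = [(tag, pos) for tag, pos in tags if tag in APPROVED_TAGS]
    if not approved:
        issues.append("no approved disclosure hashtag in caption")
    elif min(pos + len(tag) for tag, pos in approved) > FOLD_CHARS:
        # The earliest approved hashtag ends after the visible part of the caption.
        issues.append("disclosure hashtag sits below the fold")
    return issues
```

Spoken disclosure and on-screen text cannot be verified from the caption alone, so those remain manual checks in the approval flow.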

For the brand argument that disclosure transparency is a creative asset rather than a compliance tax, see our companion piece on why bigger budgets can’t fix the two things they need to fix — ROI proof and audience trust both depend on it.

Why micro-creators carry the lowest brand-safety profile

The economic case for micro-influencers is well-rehearsed: 60% higher engagement, 20% more direct conversions, lower cost per post, more authentic community fit. The brand-safety case is less talked about and just as material.

Mega-influencer campaigns concentrate risk. A single creator carrying seven figures of paid endorsement means a single brand-safety incident — historic offensive post, current legal trouble, viral controversy — cancels the entire campaign with no recoverable budget. A distributed campaign across 20 micro-creators spreads that risk by an order of magnitude. One creator going off-brand is a 5% creative replacement, not a campaign-ending event.
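The concentration arithmetic is simple enough to state as a function. This Python sketch assumes budget and deliverables are split evenly across creators, which real campaigns rarely do exactly; the function name is hypothetical.

```python
def share_at_risk(num_creators: int, incidents: int = 1) -> float:
    """Fraction of planned content lost if `incidents` creators are dropped,
    assuming an even split of budget and deliverables."""
    if num_creators <= 0:
        raise ValueError("num_creators must be positive")
    return min(incidents, num_creators) / num_creators
```

With one creator, a single incident puts the whole campaign at risk. With 20 creators, the same incident affects one twentieth of the planned content.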

Australian campaign data backs this up. Paramount Pictures’ Mission: Impossible run-club campaign in Australia used 21 carefully selected micro and mid-tier influencers to generate 325 pieces of content, reaching 3.1 million Gen Z Australians and contributing to 1.13 million ticket sales and $23.67 million AUD at the box office.11 That’s a textbook example of distributed-risk creator strategy delivering scale without the brand-safety concentration of a single celebrity endorsement.

For the strategic argument that volume of authentic creators beats one big spend — and the platform mechanics that make it operationally viable — read the blanket campaign thesis, which is the original positioning piece that the rest of the brand-side hub builds on.

The 2026 brand-safety operating standard

Pulling everything in this playbook together, the 2026 standard for an Australian brand running paid creator campaigns looks like this.

Pre-contracting.

- Every shortlisted creator passes a verification check on follower count, engagement rate, audience geography, and bot/pod patterns

- A web-intelligence safety scan runs on each finalist for past scandals, legal issues and controversial content

- Vertical-specific checks for TGA, ASIC and ABAC apply where relevant

- Creator’s age and audience-age data are verified for alcohol or age-restricted categories

Contracting.

- Specific disclosure clauses (hashtag, placement, platform-native tag, spoken acknowledgement)

- Termination rights for non-compliance with ACCC, AANA, TGA or ASIC mandates

- Prohibition on synthetic content and unauthorised AI-generated assets

- Deliverable spec including provenance metadata where applicable

Content review.

- Disclosure compliance is a gating criterion, not a post-hoc audit

- Comment moderation cadence agreed upfront for therapeutic-goods, financial-product or alcohol content

- Edit history retained on any reviewed content (the Photobook Shop precedent specifically penalised post-hoc editing of a published creator review)

Post-publication.

- Performance and risk monitoring

- Structured reviews fed back into the creator’s reputation record, so the next brand inherits the audit trail

A marketplace bakes most of those steps into the workflow. Built from scratch, this standard is an entire compliance function. Run on an existing creator marketplace, it is the default.

Frequently asked questions

What does brand safety actually mean in Australian influencer marketing in 2026?

Brand safety in 2026 is the discipline of keeping a brand legally compliant, financially protected and reputationally intact across every paid creator post. It covers ACCC consumer-law exposure, AANA and AiMCO disclosure rules, vertical regulators like the TGA and ASIC, fraud detection on followers and engagement, and AI-content vetting. The baseline assumption now is that the brand — not just the creator — is liable when something goes wrong.

Can the ACCC fine brands for an influencer’s undisclosed paid post?

Yes. In March 2026 the ACCC issued its first-ever financial penalty against a brand — $39,600 in infringement notices to Photobook Shop — for hidden influencer deals and editing a review to remove negative comments. The precedent is that liability for misleading endorsements now sits squarely on the brand commissioning the content, not only on the creator posting it.

Do gifted products and PR samples count as paid endorsements under AANA rules?

Almost always, yes. Both AANA Code of Ethics Section 2.7 and the AiMCO Influencer Marketing Code of Practice treat value-in-kind — gifts, PR samples, free trips, complimentary services — as equivalent to a paid engagement when the brand has any reasonable degree of control over the resulting content. The only narrow exception is a creator with zero prior or expected commercial relationship who posts purely out of personal affinity.

How do I know if a micro-influencer’s followers and engagement are real?

Look at three signals before you contract anyone. First, audience geography that matches their stated market: a Sydney lifestyle creator with 60% of their audience in Eastern Europe has almost certainly bought followers. Second, engagement velocity that builds steadily over 24–48 hours, not a vertical spike in the first hour followed by a flatline. Third, comment quality: strings of fire emojis and one-word praise are pod patterns, not real fans. Industry studies put fake-follower rates at 14% to 37% across the influencer population.

Are AI-generated or virtual influencers safe for brands to use?

Overt virtual personas like Lil Miquela are a transparent creative choice and disclose their nature. Covert synthetic personas designed to look human are the actual brand-safety problem because automated vetting often classifies them as real talent. Until AI-disclosure labelling is enforced reliably on TikTok and Instagram, brands should require backend platform analytics and a live video call as part of contracting, neither of which a fully synthetic persona can satisfy.

What’s the safest way to handle disclosure on TikTok and Instagram Reels?

Use platform-native paid-partnership tagging plus an explicit hashtag in the caption (#Ad or #Sponsored, not #collab or #partner), spoken acknowledgement in the first three seconds of video, and on-screen text overlay for sound-off viewers. Keep the disclosure above the fold so a viewer never has to tap “read more”. The ACCC standard is that disclosure must be immediately obvious to the ordinary consumer.

Whose responsibility is it to monitor comments on therapeutic-goods or health-claim content?

The brand’s. The TGA treats brand-controlled social channels as advertising surfaces, which means an unmoderated user comment making a non-compliant health claim — for example, an unsubstantiated weight-loss outcome — is the brand’s breach to fix. In 2024–25 the TGA requested removal of more than 13,700 unlawful social-media advertisements. Active comment moderation is a compliance task, not an optional community-management one.

How does a creator marketplace reduce brand-safety risk versus DM-based outreach?

A marketplace bakes verification, fraud detection, contractual disclosure clauses, escrow payment, and content-approval gates into the workflow itself, so each campaign inherits compliance instead of building it from scratch. DM-based outreach skips every one of those checks unless the brand has built equivalent internal tooling — which most do not. On Mega Donkey, every creator passes AI verification before they can apply, and Safety Check AI screens for risk before any contract is signed.

Brand safety as the new operating standard

The Photobook Shop fine wasn’t an aberration. It was the regulator establishing that Australian Consumer Law applies the same way online as it does in a physical retail store. The fraud benchmarks are not improving; if anything, the rise of synthetic personas and AI-generated audiences is making them worse. And the regulators in the verticals where the biggest individual penalties hit (therapeutic goods, financial products, alcohol) have already started enforcing.

The brands that come out ahead in 2026 are the ones that stop treating brand safety as a checklist owned by legal and start treating it as an operating standard owned by marketing. Verification at the floor. Safety screening before contracting. Disclosure as a feature of the creative, not a tax on it. Comment moderation as a scheduled compliance task. Reputation that follows the creator from one campaign to the next.

Ready to run influencer campaigns with verification, fraud detection and disclosure compliance built in? See our brand pricing — every campaign on Mega Donkey starts with verified creators by default.

Sources

1. ACCC media release, PhotobookShop pays penalties for influencer reviews, 2026. https://www.accc.gov.au/media-release/photobookshop-pays-penalties-for-influencer-reviews

2. ACCC, 2024–25 Compliance and Enforcement Policy and Priorities. https://www.accc.gov.au/system/files/compliance-enforcement-policy-priority-2024_0.pdf

3. ACCC media release, ACCC social media sweep targets influencers, 2023. https://www.accc.gov.au/media-release/accc-social-media-sweep-targets-influencers

4. AANA, Code of Ethics. https://aana.com.au/self-regulation/code-of-ethics/

5. DLA Piper analysis of the AiMCO Code of Practice, Influencer Marketing Best Practice in Australia: the AiMCO Code of Practice. https://www.dlapiper.com/insights/blogs/consumer-food-and-retail-insights/2023/influencer-marketing-best-practice-in-australia-the-aimco-code-of-practice

6. TGA media release, TGA releases updated social media advertising guidance to support improved compliance. https://www.tga.gov.au/news/media-releases/tga-releases-updated-social-media-advertising-guidance-support-improved-compliance

7. ASIC media release 26-081MR, ASIC continues finfluencer crackdown alongside global regulators, 2026. https://www.asic.gov.au/about-asic/news-centre/find-a-media-release/2026-releases/26-081mr-asic-continues-finfluencer-crackdown-alongside-global-regulators/

8. Business.com, How to Identify Influencer Marketing Fraud. https://www.business.com/articles/influencer-marketing-fraud/

9. Influencer Marketing Hub 2026 industry report, summarised at https://influencermarketinghub.com/deepfake-ai-generated-ugc/ and corroborated by Digital Applied and SociaVault audits.

10. Commonwealth Bank, How good are Australians at spotting an AI-powered deepfake scam?, 2026. https://www.commbank.com.au/articles/newsroom/2026/01/can-australians-spot-deepfake-scams.html

11. AiMCO case study, Australia’s top influencer campaigns: Paramount Pictures Mission: Impossible run club campaign by HELLO. https://www.mediaweek.com.au/aimco-unveils-case-studies-spotlighting-australias-top-influencer-campaigns/
